How to Build & Publish a Terraform Provider

Documenting the critical and secondary steps involved in building and publishing a Terraform provider to the Terraform Registry, based on my recent experience.

I’m going to go out on a limb and say many of us have had a similar experience with Terraform provider development:

“I tried briefly once before and totally gave up”

The barrier to initial entry is quite high but it doesn’t need to be. Hopefully my steps can save others some time.

Backstory#

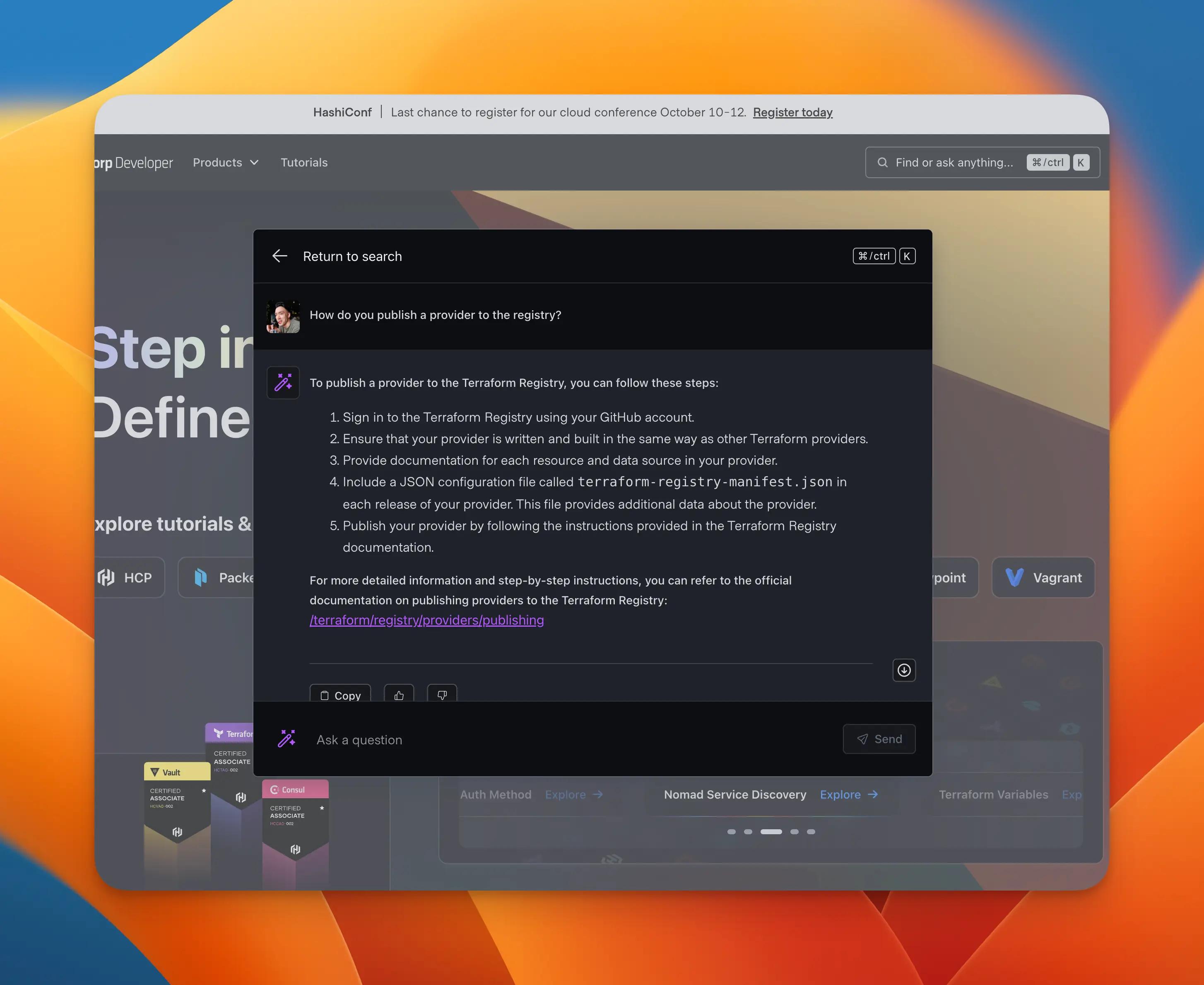

Yesterday, October 4, 2023, was my last day at HashiCorp after a great two-year run on the awesome Web Presence team. Prior to my departure, I shipped “Developer AI” as a closed beta. This is an LLM-powered Q&A interface with retrieval-augmented generation (RAG).

The backend API that powers this widget has two datastores. The first is a PostgreSQL database for storing messages in a tree-like structure. (This is an arbitrary implementation detail, based on what I could glean from the experience on chat.openai.com). The second datastore is a Pinecone vector database, populated with OpenAI GPT3.5 vector embeddings, created from HashiCorp product docs, like Terraform and Consul.1

In preparing the project for what was effectively maintenance mode after my departure, I wanted to make sure that all infrastructure (AWS, Azure OpenAI, and Pinecone) was captured in infrastructure as code (IAC) with Terraform.

The AWS parts constituted 95% of the infrastructure and were easy to capture, since AWS was already familiar. However, Pinecone was a new service, and no “Official” or “Partner” Terraform provider existed, so by pragmatic choice, that IAC effort was tabled.

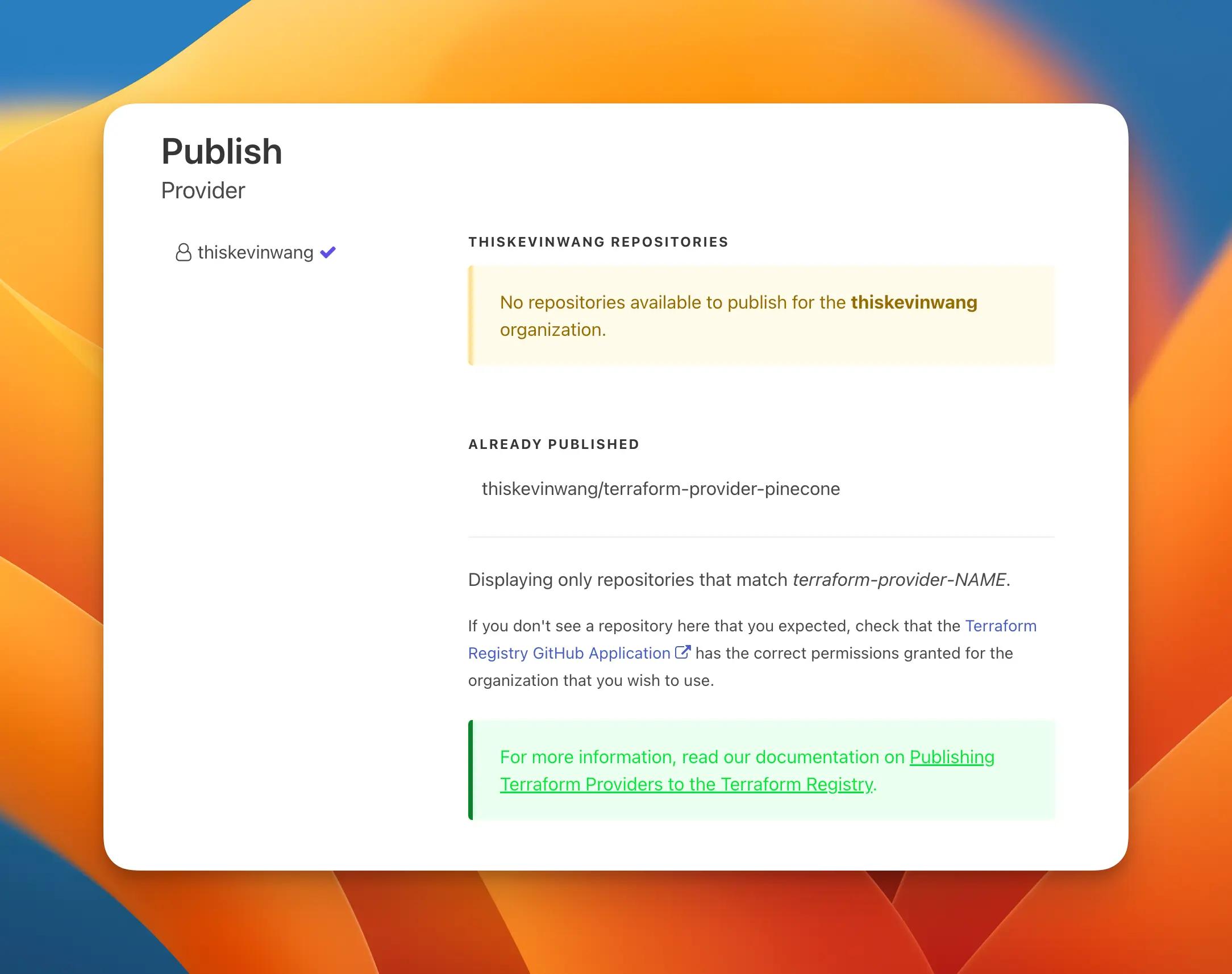

In my last 24 hours, I started cranking out my first provider... and published it to the registry just today! I’d say it took me a cumulative 12-18 hours of development to get a minimum viable product.

Tip

You can check out the source code at github.com/thiskevinwang/terraform-provider-pinecone. Note: All the code block file paths in the rest of this document reference the structure of this repository.

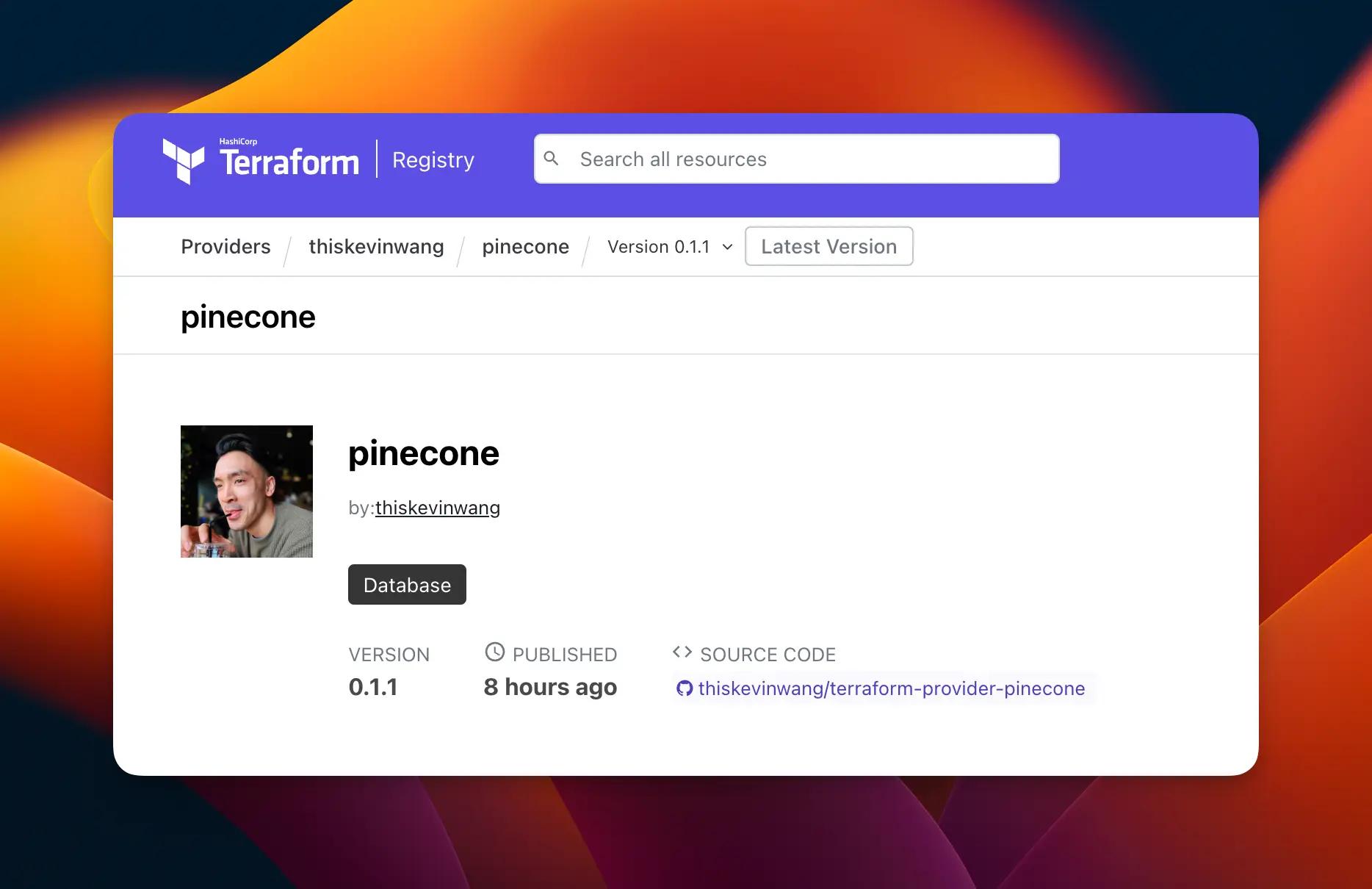

The registry page is at registry.terraform.io/providers/thiskevinwang/pinecone

Anyways, on to the business...

Critical#

The process of building a Terraform provider is not too bad! But the end to end experience is strewn with numerous paper cuts and the information is presented in a fragmented manner, making for a subpar development experience.

Here is a rough collection of what I felt were critical pieces worth documenting, in a unified and ordered view. This is biased towards the publishing process, rather than the development process, because:

“If it’s not deployed, it doesn’t exist.”

Don’t you agree?

Feedback loop#

Note

All the following writing is predicated on the assumption that there is some semblance of Terraform provider code. The Terraform Provider Scaffolding Framework is a great starting point, though I chose to start from zero.

A quick feedback loop is critical to any sane development process.

Setting this up for Terraform provider development enables running terraform plan, apply, and destroy locally, to verify each code change.

From here on, the writing will take a very much “do this, then do that” tone, so bear with me!

Update ~/.terraformrc with the following. The key in the dev_overrides stanza is mostly arbitrary, but will be referenced in Terraform HCL code.2
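As a rough sketch of what such a stanza can look like: the key `example.com/dev/pinecone` and the directory path below are placeholders (assumptions, not the values from my machine). The path should point at the directory where the built provider binary lands, which is typically your Go bin directory.

```hcl
provider_installation {
  dev_overrides {
    # Placeholder key and path: pick any source address you like,
    # and point it at the directory containing the built provider binary.
    "example.com/dev/pinecone" = "/Users/you/go/bin"
  }

  # Install every other provider from its origin registry as usual.
  direct {}
}
```

With an override in place, Terraform uses the local binary for that source address and prints a warning that development overrides are in effect.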

And the usage in Terraform code that you want to test locally looks like:
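A minimal sketch, assuming the dev_overrides key was set to the placeholder `example.com/dev/pinecone` (substitute whatever key you actually chose). The local name `pinecone` is also an assumption for illustration.

```hcl
terraform {
  required_providers {
    pinecone = {
      # Must match the key used in the dev_overrides stanza of ~/.terraformrc.
      source = "example.com/dev/pinecone"
    }
  }
}
```

The `source` value is the only link between the configuration and the locally built binary, which is why the key is arbitrary but has to match exactly.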

For me, testing each source code change involved two terminal windows:

- Reinstall the provider binary, from ~/terraform-provider-pinecone

- Run a terraform command of choice, from ~/terraform-provider-pinecone/examples/basic

Documentation generation#

Documentation is technically optional and doesn’t prevent a provider from making it to the registry, but I consider it a critical requirement for any trusted provider.

The designated tool for this is tfplugindocs. 3

How do you use it?

Add /tools/tools.go to the provider project.

And run the following commands to verify installation, and generate docs:

Running tfplugindocs will generate a tree of docs based on the Go code written. Take note of fields like (schema.Schema).MarkdownDescription.

For example:

These docs will ultimately be rendered on the registry in a matching tree format.

Releasing#

Here’s my distilled overview of the release process, which was not necessarily a code-heavy part, but involved many moving parts.

Valid repository name#

The GitHub repository name must adhere to terraform-provider-{lowercase} in order for Terraform Registry to accept it. 4

Warning

This is honestly worth mentioning as the very first order of business.
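Since this is easy to get wrong before any code exists, here is a small Go sketch of a name check. The exact character set the registry accepts is an assumption on my part (lowercase letters, digits, and hyphens after the prefix); the function name `validRepoName` is hypothetical and the registry docs remain the authority.

```go
package main

import (
	"fmt"
	"regexp"
)

// repoNamePattern encodes an assumed reading of terraform-provider-{lowercase}:
// the fixed prefix followed by lowercase letters, digits, or hyphens.
// The registry's real validation may be stricter or looser.
var repoNamePattern = regexp.MustCompile(`^terraform-provider-[a-z0-9][a-z0-9-]*$`)

// validRepoName reports whether name matches the assumed naming rule.
func validRepoName(name string) bool {
	return repoNamePattern.MatchString(name)
}

func main() {
	for _, name := range []string{
		"terraform-provider-pinecone",
		"terraform-provider-Pinecone",
		"pinecone-terraform-provider",
	} {
		fmt.Printf("%-32s valid=%t\n", name, validRepoName(name))
	}
}
```

Only the first name passes; the second fails on the uppercase letter and the third on the missing prefix.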

Add a manifest#

Add a terraform-registry-manifest.json file to the project root.

This is a special file that the registry looks for in each release.

I used the Terraform Plugin Framework so protocol_versions is set to 6.0. 5
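For reference, a minimal manifest for a Plugin Framework provider can look like the sketch below. The shape follows the registry's publishing docs as I understand them; the only value specific to this post is the 6.0 protocol version.

```json
{
  "version": 1,
  "metadata": {
    "protocol_versions": ["6.0"]
  }
}
```

The top-level `version` is the version of the manifest format itself, not the version of the provider.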

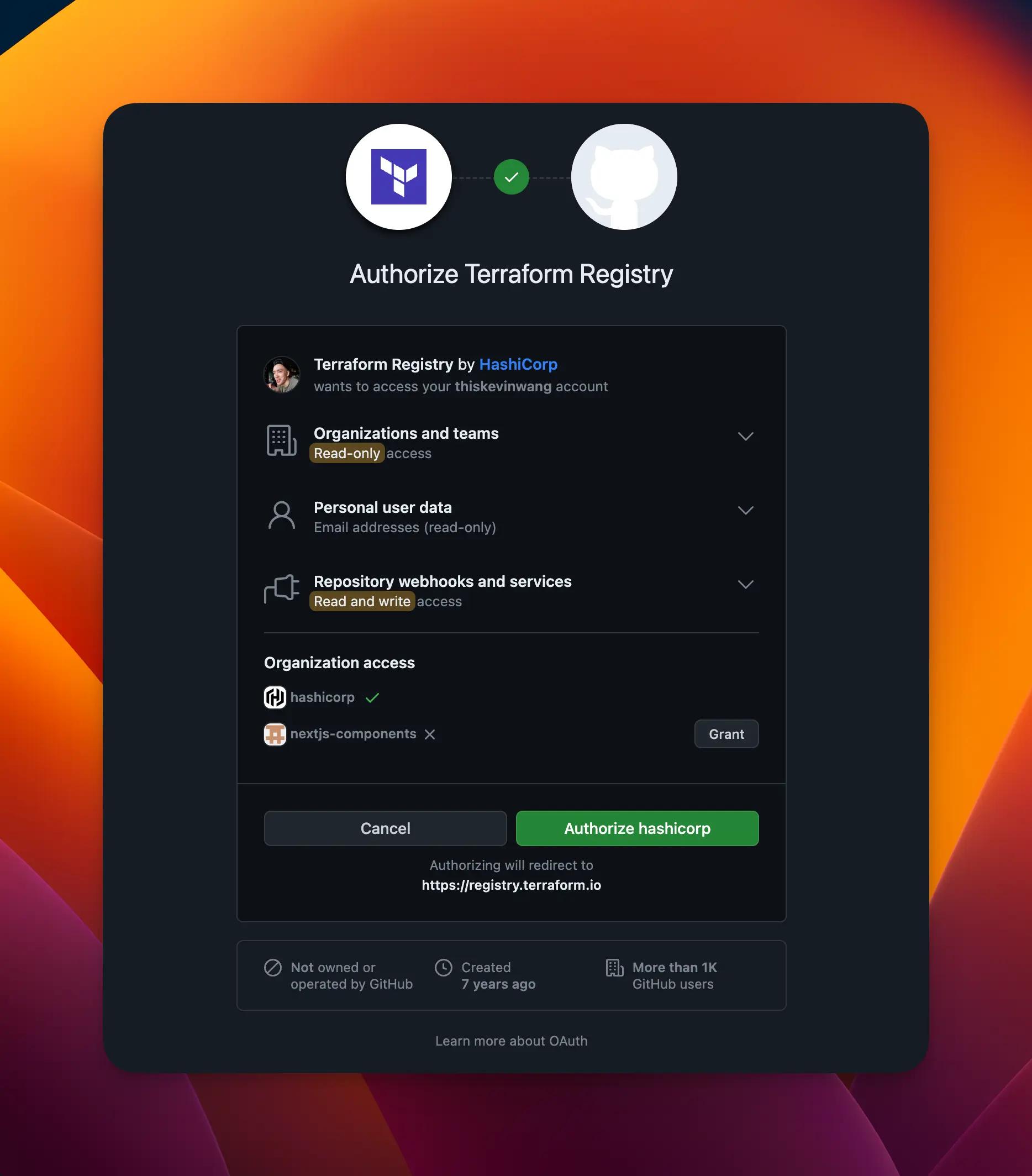

Registry authorization#

These are a series of mostly one-time, web-based steps to authorize the registry to detect your provider and read its releases.

Sign in to the Terraform Registry and authorize the GitHub app.

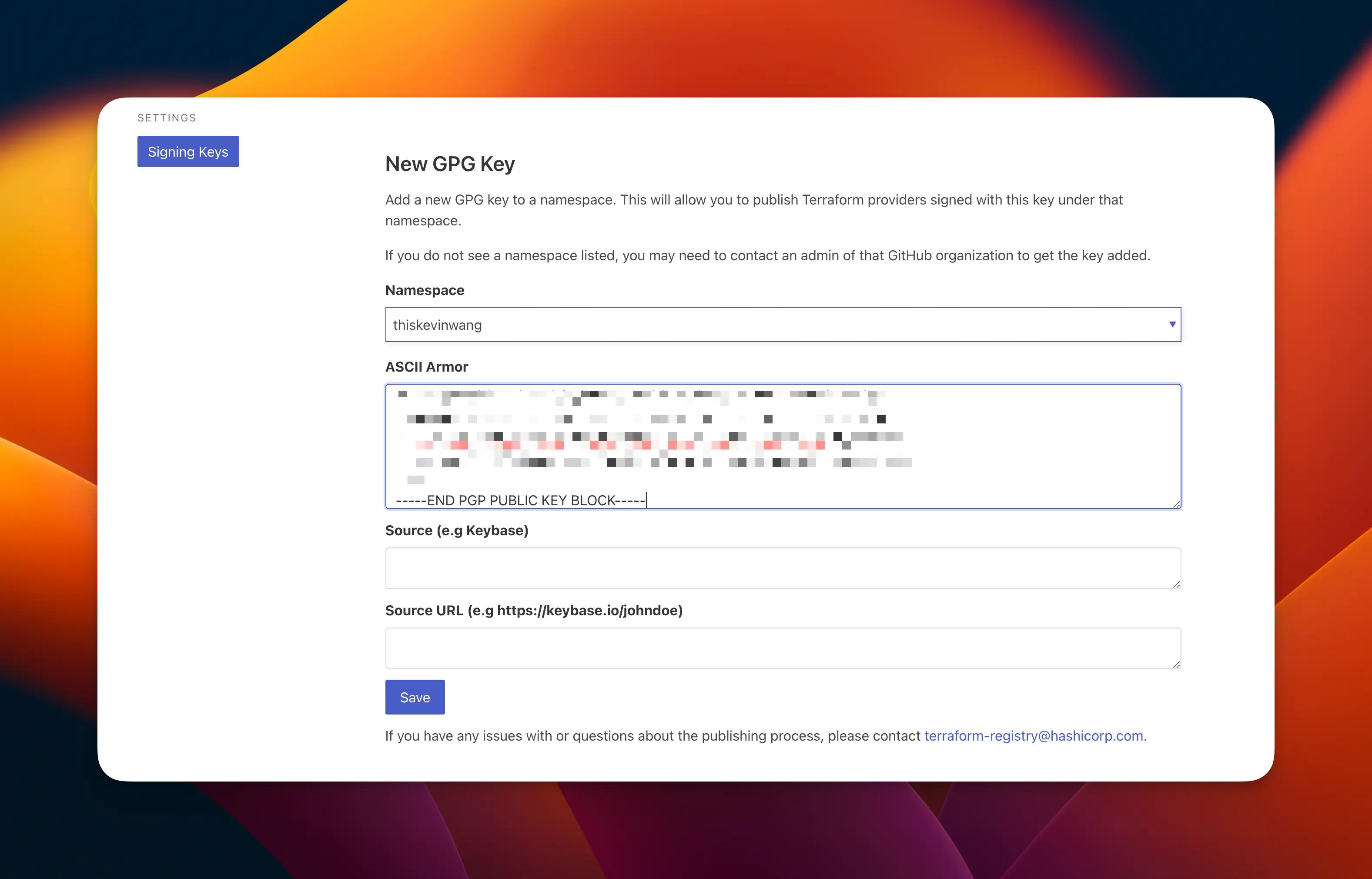

Export your public GPG key in ASCII-armored form and add it to the Signing Keys view.

GitHub release#

At this point, Terraform Registry should be able to auto detect the provider, based on the name, and read its GitHub releases for expected artifacts.

(No releases should exist yet though.)

Of the few options listed on the publishing docs, I chose using goreleaser locally since I had used it before, and wanted to avoid the additional complexity of, say, working with a remote CI environment.

Add .goreleaser.yml

Copy this file from terraform-provider-scaffolding-framework

Then create and push a tag, and run goreleaser.

Debugging

If there's an error about GPG_FINGERPRINT not being set, look up the fingerprint of your signing key with gpg and export it as the GPG_FINGERPRINT environment variable before running goreleaser again.

Note

I’m not a security expert, so do not take these GPG-related steps as scripture. Refer to Signing Key steps if anything seems off!

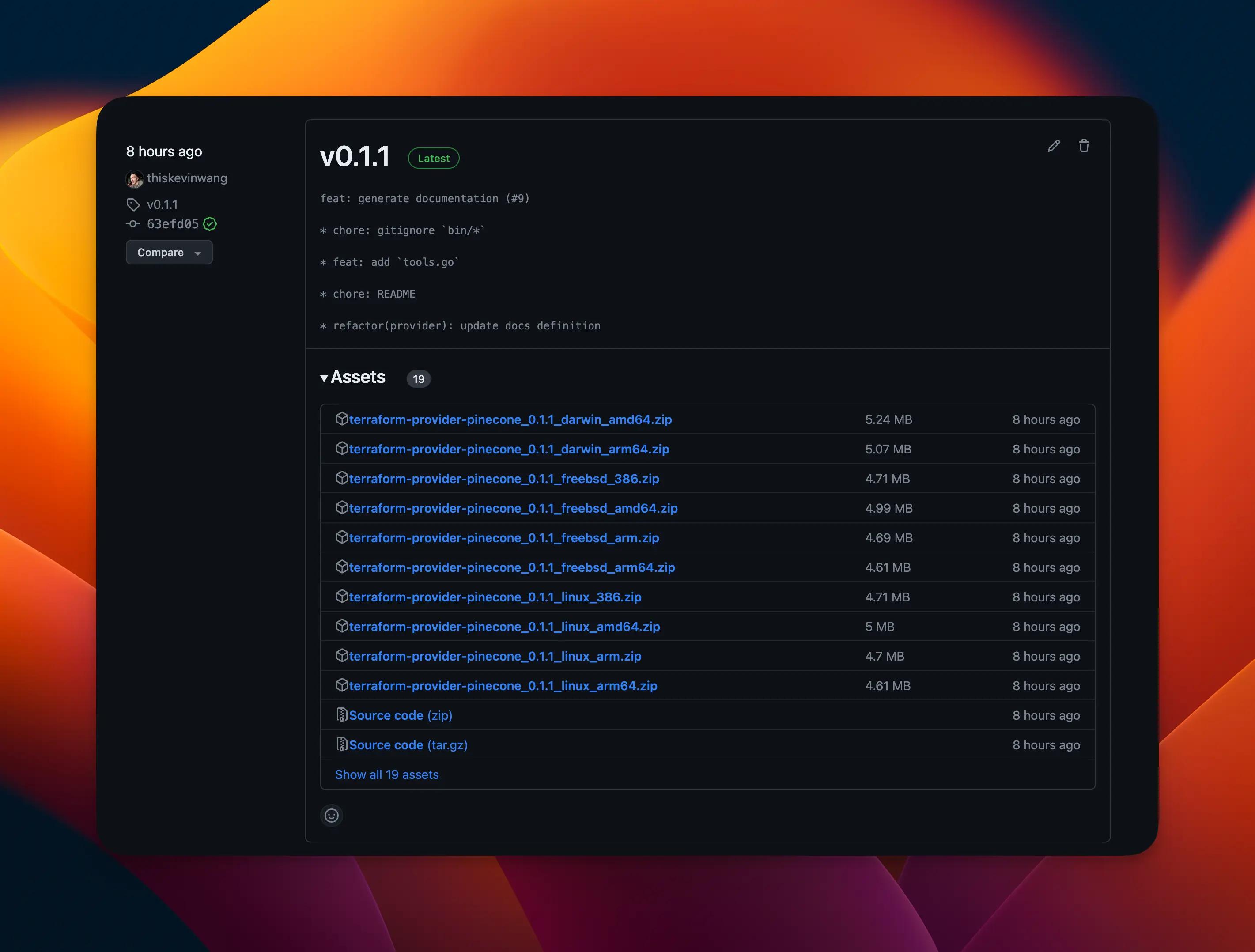

goreleaser should create a new GitHub release with various artifacts included.

The Terraform Registry should detect this new release and create a new version — like magic.

That’s it!

This marks the end of the release process, and of all the critical steps that I remember.

Secondary#

Ok, broad strokes aside, here are some other, lower-level issues that involved non-trivial effort. Not critical but still worth sharing for future reference.

Scaffolding#

There are two GitHub repositories that serve as standards for copy-paste — every developer’s absolute best friend.

- Terraform Provider Scaffolding Framework

- Terraform Provider HashiCups PF: This repository is nearly identical to the one above, but is intended to accompany a HashiCorp tutorial. I personally did not find this tutorial worth going through. The source code is an OK reference though, and just a slightly more realistic representation of a provider than the base scaffolding repository. Ignore the docker-compose parts and use a real external service instead!

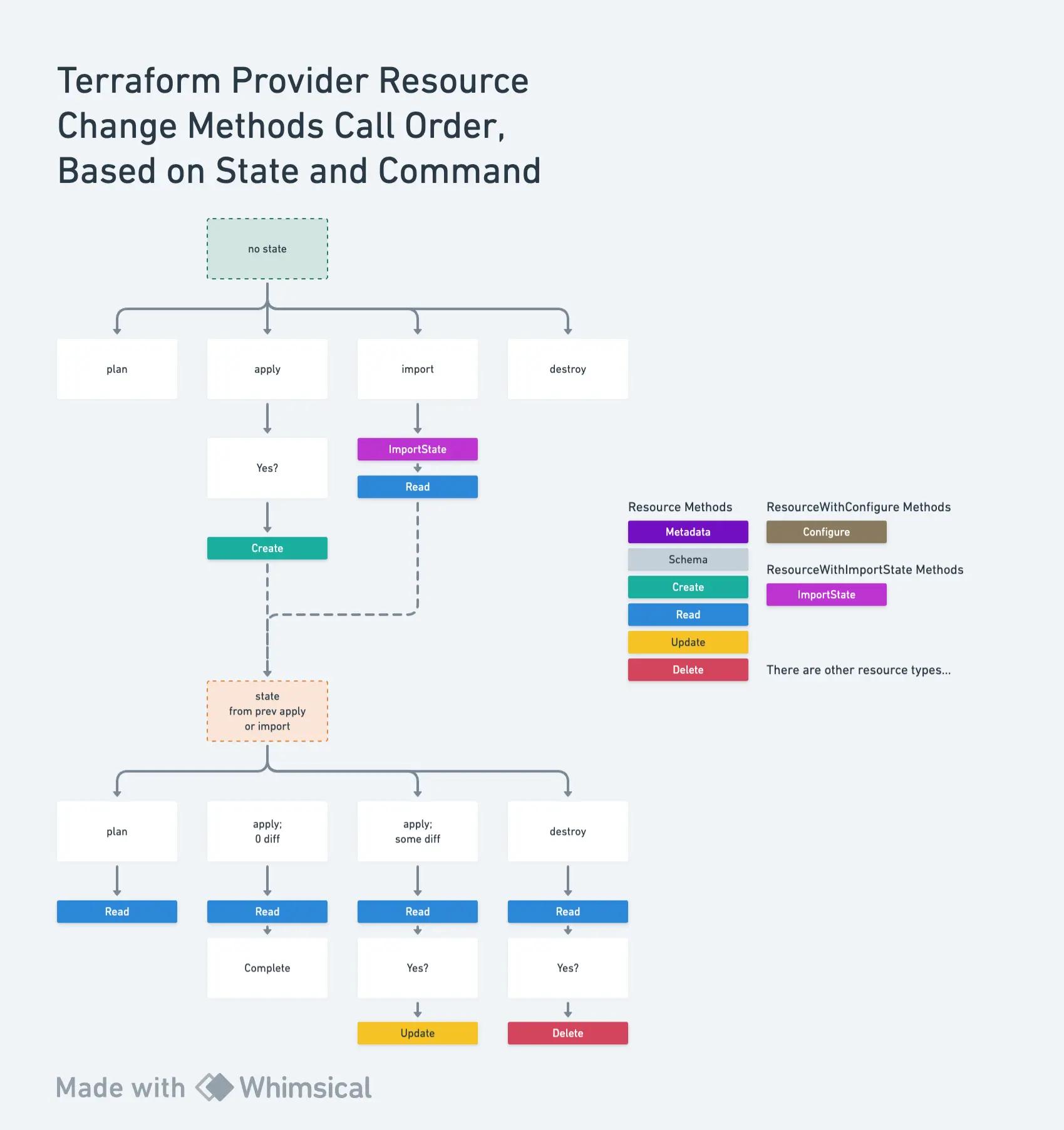

Lifecycle of a resource#

Reasoning about the lifecycle of a resource for the first time is pretty daunting since there are so many permutations of commands and state.

And because it’s not super clear which provider methods are being called under the hood, development can feel quite opaque or very much like trial-and-error.

There are a few very helpful pages that are either not on https://developer.hashicorp.com or are simply hidden behind the complex navigation.

I shipped a project called "Versioned Docs", aka "Distributed Remote Docs" for https://developer.hashicorp.com — a project of epic proportions 👦🏼👦🏻 — and I have the curse of knowledge of knowing where to look to sidestep the complex information architecture. 😅

This is compounded by the fact that I shipped the AI chat Q&A feature, which sidesteps the information architecture even more by retrieving document information from a vector database. 🤪

I painstakingly ran multiple permutations of plan, apply, import, and destroy, with and without existing state, and picked out the log statements I had added, in order to get a firsthand look at the exact call order of various resource methods.

This is what I gathered:

And my approach behind this was:

- Add log statements to the top of each method. For example, in ~/terraform-provider-pinecone/internal/resources/index.go

- Run terraform with JSON logging, from ~/terraform-provider-pinecone/examples/basic. 6

- Meticulously comb through the logs with the help of a regular expression like "indexResource.[a-z]+".+tf_rpc., from ~/terraform-provider-pinecone/examples/basic

- Diagram my findings.
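The log-combing step can also be scripted. Below is a stdlib-only Go sketch, not what I actually ran: it assumes each log line is a JSON object with an `@message` field and a `tf_rpc` field (my understanding of the JSON log output), and that the log statements added to each method use a message like `indexResource.Create`. The sample lines, the `call` type, and the `extractCalls` function are all hypothetical, and the regular expression is adapted to match capitalized method names.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"regexp"
	"strings"
)

// methodPattern matches messages such as "indexResource.Create".
// The message format is an assumption: it is whatever you chose to log.
var methodPattern = regexp.MustCompile(`indexResource\.[A-Za-z]+`)

// call pairs a logged resource method with the RPC recorded on the same line.
type call struct {
	Method string
	RPC    string
}

// extractCalls scans newline-delimited JSON logs and returns, in order,
// every entry whose "@message" mentions an indexResource method.
func extractCalls(logs string) []call {
	var calls []call
	scanner := bufio.NewScanner(strings.NewReader(logs))
	for scanner.Scan() {
		var entry map[string]any
		if err := json.Unmarshal(scanner.Bytes(), &entry); err != nil {
			continue // skip lines that are not JSON objects
		}
		msg, _ := entry["@message"].(string)
		method := methodPattern.FindString(msg)
		if method == "" {
			continue // not one of our log statements
		}
		rpc, _ := entry["tf_rpc"].(string)
		calls = append(calls, call{Method: method, RPC: rpc})
	}
	return calls
}

func main() {
	// Hypothetical sample lines shaped like JSON log output.
	sample := `{"@level":"info","@message":"indexResource.Read","tf_rpc":"ReadResource"}
not json
{"@level":"trace","@message":"unrelated"}
{"@level":"info","@message":"indexResource.Create","tf_rpc":"ApplyResourceChange"}`
	for i, c := range extractCalls(sample) {
		fmt.Printf("%d. %s (tf_rpc=%s)\n", i+1, c.Method, c.RPC)
	}
}
```

Printing the matches in order gives the raw material for a call-order diagram. Real trace output can contain very long lines, so a larger buffer via `scanner.Buffer` may be needed when reading actual log files.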

Tactics#

For a sane provider development experience, pick a service with fully spec’d API docs, like Pinecone. Provider development is essentially a ton of CRUD operations, so having a clear understanding of the target API is very important.

Start with a Data Source. Data Sources are read-only and significantly easier to get to a working state than a Resource.

The rest is Go#

After all this, the rest of the provider development gauntlet is just writing Go code. Being able to connect the dots between a Terraform HCL configuration and the provider code is plenty enough to achieve a minimal, working provider. I will acknowledge that this is not actually easy by any means, but it is not worth expanding on here.

A simple heuristic for starting to understand how a Terraform provider’s code works is to view the source code of a simple provider...

Oh hey, I have one of those...terraform-provider-pinecone 🥳.

Footnotes#

1. See Pinecone & OpenAI if you are unfamiliar with these services.

2. More detailed docs on setting ~/.terraformrc at: Local provider install

3. Installation instructions for terraform-plugin-docs were dated, so I opened a pull request to improve them: hashicorp/terraform-plugin-docs #289

4. See the warning from https://developer.hashicorp.com/terraform/registry/providers/publishing. This warning needs to be more prominent.

5. Terraform registry manifest file: https://developer.hashicorp.com/terraform/registry/providers/publishing#terraform-registry-manifest-file

6. A short documentation page that lists various log levels and options: https://developer.hashicorp.com/terraform/internals/debugging